Eco-Friendly AI

Gray Whale is the world's first truly eco-friendly AI. While the industry races toward more tokens and massive cloud server farms, our Large Interaction Model (LIM) runs at 1/500th the energy and data cost of any other inference technique.

The Problem: The Token Trap

Current cloud AI is held back by extreme costs and a massive environmental footprint. Traditional coding agents, for example, react to each step instead of reasoning through a sequence of steps. To compensate, they load excessive data into the context window on every round, leading to:

- Massive token spend and energy waste.

- Increased potential for faulty reasoning and hallucinations.

- Dependence on power-hungry, centralized infrastructure.

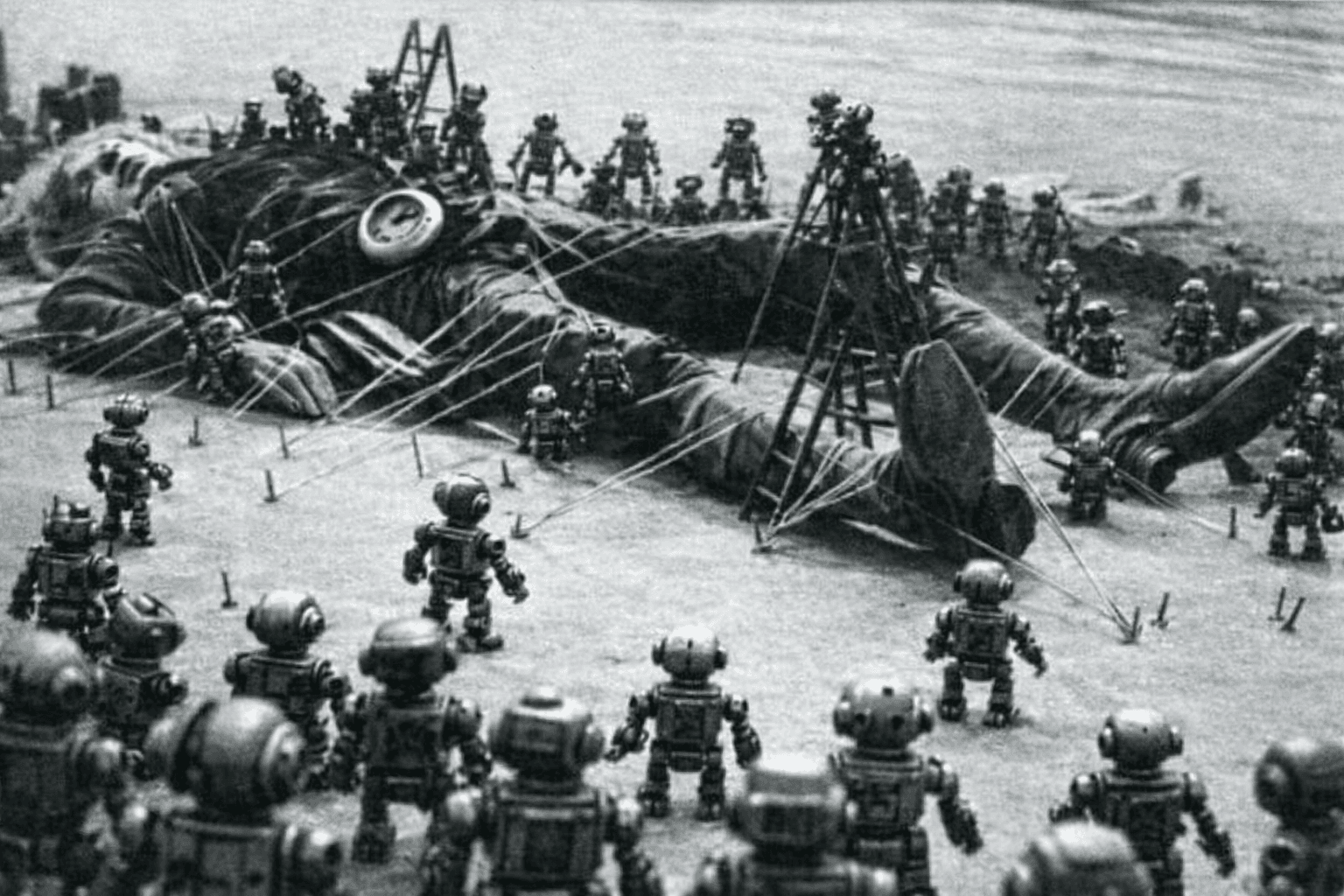

Traditional cloud AI is like a giant tied down by hundreds of tiny robots: cumbersome, resource-heavy, and unsustainable.

The Core: Large Interaction Model (LIM)

The Large Interaction Model is not "better RAG." Retrieval-augmented generation (RAG) makes your LLM smarter, but it doesn't make it cheaper. The LIM removes the LLM from the loop entirely for ranking, selection, and personalization tasks.

The LIM is a reasoning model trained on your dataset. It returns decisions, not generated text. Because there is no LLM generation step, there is no token burn and no possibility of hallucination.

The LIM is like a grandmaster playing chess: it plans strategy and reasons several moves ahead instead of just reacting to the last move.

Proven by Results

Gray Whale provides a predictable, cost-effective method for session-based AI interactions that simply works. Our technology is not just a concept; it is a proven approach to machine learning with a track record of high-impact performance.

Empirical Evidence

- 36% Average Revenue Lift: Across millions of shopper sessions and 25 diverse brands, controlled A/B testing confirmed a massive lift in conversion and revenue performance.

- Learning "Faster than the Speed of Statistics": The LIM learns enough to drive significant improvements in fewer than a dozen interactions, making it the most sample-efficient reasoning engine available.

- Zero Third-Party Data Reliance: By learning in real time as users interact, we eliminate the need to harvest historical data or rely on privacy-invasive third-party tracking.

- Battle-Tested Infrastructure: Derived from $35 million in DARPA R&D, the LIM architecture currently serves over 15 million monthly users and has processed half a billion interactions to date.

AI You Can Budget For

- Session Economics: $0.04 per session (~10 interactions).

- Predictable: CFOs can enter session volume and get a line item they can actually budget for.

- Hallucination-Free: Decisions are reasoned natively from your Intelligent Data.
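To show how session pricing turns into a budget line item, here is a minimal sketch of the arithmetic. It assumes a flat $0.04 per session and roughly 10 interactions per session, as stated above; the helper names and the example monthly volume are hypothetical and not part of any Gray Whale API.

```python
from decimal import Decimal

# Pricing figures from the list above, treated here as flat-rate assumptions.
PRICE_PER_SESSION = Decimal("0.04")  # USD per session
INTERACTIONS_PER_SESSION = 10        # approximate ("~10 interactions")


def session_line_item(monthly_sessions: int) -> Decimal:
    """Hypothetical helper: monthly budget line item in USD for a given session volume."""
    if monthly_sessions < 0:
        raise ValueError("session volume must be non-negative")
    return PRICE_PER_SESSION * monthly_sessions


def cost_per_interaction() -> Decimal:
    """Approximate cost of one interaction, assuming ~10 interactions per session."""
    return PRICE_PER_SESSION / INTERACTIONS_PER_SESSION


if __name__ == "__main__":
    volume = 1_000_000  # hypothetical monthly session volume
    print(f"Monthly line item: ${session_line_item(volume):,.2f}")
    print(f"Approx. cost per interaction: ${cost_per_interaction()}")
```

At a hypothetical 1,000,000 sessions per month, this yields a $40,000.00 line item, or roughly $0.004 per interaction. `Decimal` is used instead of `float` so currency amounts do not pick up binary rounding error.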

Real-World Impact

- Massive Cost Savings: Teams using expensive cloud plans can cut their costs in half, or get twice as much AI for the same spend.

- 10x Code Quality: Our reasoning-first architecture increases average code quality by a factor of 10.

- Empowering Local Open Source: Local, free models can finally begin to rival massive frontier cloud models through LIM-backed efficiency.

- Zero Custom RAG: Completely removes the need to train energy-intensive custom RAG models for decision-based use cases.